Introduction to Data Mining Algorithms

If you have not yet come across the term “data mining algorithms”, it helps to discuss “data mining” itself before turning to the algorithms. In this tutorial, we look at a range of techniques used for data extraction. Data mining is the practice of extracting useful information from large amounts of data, and several strategies and procedures are applied to sizable data sets to do so.

Big data analytics is a fast-growing field, so these techniques are well worth learning.

These strategies take the form of methods and algorithms applied to data sets. The algorithms and approaches covered here include the Apriori algorithm, the statistical procedure-based approach, the machine learning-based approach, neural networks, classification algorithms, the ID3 algorithm, the C4.5 algorithm, the K Nearest Neighbors algorithm, the Naïve Bayes algorithm, the SVM algorithm, and J48 decision trees.

What is Data Mining?

The concept of data mining has been around for a long time. It is the process of sorting through large data sets to identify and uncover patterns, relationships, and other valuable information for business intelligence that can help organizations solve problems, reduce risks, and explore new opportunities.

Data mining solutions and tools make it possible for enterprises to forecast future trends and make better-informed business decisions. Data mining is a vital component of data analytics overall and one of the primary disciplines in data science, which uses advanced analytics methods to find useful information in data sets.

At a more granular level, data mining is a stage in the Knowledge Discovery in Databases (KDD) process, a data science methodology for gathering, processing, and analyzing information. Data mining and KDD are often referred to interchangeably, but they are generally viewed as distinct things.

Data has become part of every facet of business and life. Businesses today can harness data mining programs and machine learning for almost everything, from boosting their sales operations to interpreting financials for investment purposes. Consequently, data scientists are becoming crucial to organizations around the globe as businesses seek to attain bigger goals than ever before.

Types of Data Mining

Data mining can be performed on the following types of data sources:

Relational Database

A relational database is a collection of data sets formally organized into tables, records, and columns, from which data can be accessed in many ways without having to reorganize the database tables. Tables convey and share information, which facilitates data searchability, reporting, and organization.

Data Warehouses

A data warehouse collects information from a variety of sources within the organization to offer meaningful business insights. The large volume of data comes from several places, such as Finance and Marketing. The extracted information is used for analytical purposes and helps in decision-making for a company or organization. A data warehouse is designed for the analysis of information rather than for transaction processing.

Data Repositories

A data repository generally refers to a place for data storage. However, many IT professionals use the term more specifically to refer to a particular kind of setup within an IT structure, for instance, a group of databases in which an organization keeps various kinds of information.

Object-Relational Database

A fusion of an object-oriented database model along with a relational database model is referred to as an object-relational model. It supports Classes, Inheritance, Objects, etc.

One of the major goals of the object-relational data model is to close the gap between the relational database and the object-oriented model practices that are often used in programming languages such as C++, Java, and C#.

Transactional Database

A transactional database is a database management system (DBMS) that can undo a database transaction if it is not carried out properly. Although this was a distinctive capability a long time ago, today the majority of relational database systems support transactional database operations.

What are Data Mining Algorithms?

A data mining algorithm is a set of heuristics and calculations that creates a model from data. To create a model, the algorithm first analyzes the data you supply, looking for particular kinds of patterns or trends.

The algorithm uses the results of this analysis over many iterations to find the optimal parameters for creating the mining model. These parameters are then applied across the entire data set to extract actionable patterns and detailed statistics. The mining model that an algorithm creates from the data can take a variety of forms, including:

- A set of clusters that illustrate how the instances in a data set are associated

- A decision tree that predicts a result, and also explains how distinct criteria impact that final result

- A mathematical model which forecasts sales

- A set of rules that explain how products are grouped together in a transaction, along with the probability that products are bought together

Data mining algorithms are a specific class of algorithms used to analyze data and build data models that reveal meaningful patterns. They are part of machine learning. These algorithms are implemented in various programming languages, such as R and Python, as well as in dedicated data mining tools, to derive optimized data models.

Some of the widely used data mining algorithms are C4.5 for decision trees, K-means for cluster analysis, Support Vector Machines, the Naive Bayes algorithm, and the Apriori algorithm for association rule mining. These algorithms are part of data analytics applications used by organizations, and they are based on mathematical and statistical formulas applied to the data set.

Why Are Algorithms Used in Data Mining?

With a massive amount of information being stored every single day, businesses are now interested in learning the trends hidden in it. Data extraction techniques help transform raw data into valuable information. To mine a large amount of data, software is essential, because it is nearly impossible for a human to go through such a huge volume of information manually.

A data mining program analyses the relationships between different objects in large databases, which can assist in the decision-making process. It also helps to learn more about customers, craft marketing strategies, boost sales, and reduce costs.

Here are a few reasons that explain the use of data mining algorithms:

- In the present-day world of “big data”, a large database is becoming the norm. Just imagine a database holding many terabytes of data.

- Facebook alone crunches 600 terabytes of new data every day. The key challenge of big data is how to make sense of it.

- Sheer volume is not the only issue. Big data also tends to be diverse, unstructured, and fast-changing. Think of audio and video data, social media posts, geospatial data, or 3D data. This kind of data is not easily categorized or organized.

- To meet this challenge, a range of automated methods for extracting information is needed.

Data Mining Algorithms

Let us now look at various data mining algorithms:

Apriori Data Mining Algorithms

The Apriori algorithm works by learning association rules. Association rule mining is a data mining technique used to determine how variables in a database are related. Once the association rules have been learned, they are applied to a database containing a large number of transactions.

The Apriori algorithm is a form of unsupervised learning used to find mutual links and interesting patterns. Even though the method is quite effective, it uses a great deal of memory, takes up a lot of disk space, and requires a long time to run.
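To make the idea more concrete, here is a minimal sketch of the frequent-itemset stage of Apriori in Python. The shopping baskets, the `min_support` threshold (expressed as a transaction count), and the function name `apriori_frequent_itemsets` are illustrative assumptions rather than part of any particular library, and the final step of turning frequent itemsets into rules is left out.

```python
from itertools import combinations

def apriori_frequent_itemsets(transactions, min_support):
    """Return {itemset: count} for every itemset found in at least min_support transactions."""
    transactions = [frozenset(t) for t in transactions]
    # Level 1 candidates: every individual item.
    candidates = {frozenset([item]) for t in transactions for item in t}
    frequent = {}
    k = 1
    while candidates:
        # Count how many transactions contain each candidate itemset.
        counts = {c: sum(1 for t in transactions if c <= t) for c in candidates}
        level = {c: n for c, n in counts.items() if n >= min_support}
        frequent.update(level)
        # Join step: combine frequent itemsets into candidates one item larger.
        k += 1
        joined = {a | b for a in level for b in level if len(a | b) == k}
        # Prune step: keep a candidate only if all of its (k-1)-item subsets are frequent.
        candidates = {c for c in joined
                      if all(frozenset(s) in level for s in combinations(c, k - 1))}
    return frequent

# Hypothetical shopping baskets, for illustration only.
baskets = [
    {"bread", "milk"},
    {"bread", "butter", "milk"},
    {"bread", "butter"},
    {"milk", "butter"},
]
result = apriori_frequent_itemsets(baskets, min_support=2)
for itemset, count in sorted(result.items(), key=lambda kv: (len(kv[0]), sorted(kv[0]))):
    print(sorted(itemset), count)
```

The repeated passes over all transactions in the counting step are where the memory and running-time costs mentioned above come from.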

Statistical Procedure Based Approach Data Mining Algorithms

Two main phases of work on classification can be identified within the statistical community: an earlier classical phase and a later “modern” phase. The modern phase concentrates on more flexible classes of models, many of which attempt to estimate the joint distribution of the features within each class, which can in turn provide a classification rule.

Generally, statistical methods are characterized by an explicit underlying probability model, which provides a probability of being in each class rather than just a classification. It is also usually assumed that the methods will be used by statisticians, so some human involvement is assumed with regard to variable selection, transformation, and the overall structuring of the problem.

Machine Learning-Based Approach Data Mining Algorithms

In general, machine learning covers automatic computing procedures, based on logical or binary operations, that learn a task from a series of examples. Here, we concentrate on decision tree approaches, in which classification results from a sequence of logical steps. Given enough data, these can represent even the most complex problems. Other techniques, such as genetic algorithms and inductive logic programming (ILP), are currently under active development.

In principle, these techniques would allow us to deal with more general types of data, including cases in which the number and type of attributes may vary. The machine learning approach aims to generate classifying expressions that are simple enough to be understood by a human, and that mimic human reasoning well enough to offer insight into the decision process. As with statistical methods, background knowledge can be used during development, but operation is assumed to take place without human intervention.

Neural Network Data Mining Algorithms

The field of neural networks has arisen from several sources, ranging from the desire to understand and emulate the human brain to the broader problem of copying human abilities such as speech and the use of language. Neural networks are applied in many areas. In banking, for example, a neural network can act as a classifier that labels activity as normal or intrusive. Generally, neural networks consist of layers of interconnected nodes. Each node produces a non-linear function of its input, and the input to a node may come from other nodes or directly from the input data. Some nodes are identified with the output of the network.

Building on this, there are many applications for neural networks, including recognizing patterns and making simple decisions about them. In airplanes, a neural network can be used as a basic autopilot, in which input units read signals from the various instruments and output units adjust the plane's controls appropriately to keep it steadily on course. Inside a factory, neural networks can be used for quality control.
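The node behaviour described above, where each node produces a non-linear function of its inputs and feeds other nodes, can be sketched in a few lines of Python. The logistic (sigmoid) function is just one common choice of non-linearity, and the weights below are made-up values for illustration, not the result of any training.

```python
import math

def sigmoid(z):
    """Logistic function, one common choice of non-linearity."""
    return 1.0 / (1.0 + math.exp(-z))

def node_output(inputs, weights, bias):
    """A single node: weighted sum of its inputs passed through a non-linear function."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)

def layer_output(inputs, layer):
    """A layer is a list of (weights, bias) pairs, one pair per node."""
    return [node_output(inputs, weights, bias) for weights, bias in layer]

# Hypothetical, untrained weights chosen only to show the data flow.
hidden_layer = [([0.5, -0.5], 0.0), ([1.0, 1.0], -1.0)]
output_layer = [([1.0, -1.0], 0.0)]

x = [1.0, 1.0]                           # values read directly from the input data
hidden = layer_output(x, hidden_layer)   # nodes fed by the input data
y = layer_output(hidden, output_layer)   # node fed by other nodes; this is the network output
print(hidden, y)
```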

Classification Algorithms in Data Mining

Classification is a data mining technique that examines a given data set, takes each instance of it, and assigns that instance to a particular class in such a way that the classification error is as small as possible. It is used to extract models that define the important data classes within the given data set. Classification is a two-step process.

In the first step, the model is built by applying a classification algorithm to the training data set. In the second step, the extracted model is tested against a predefined test data set to measure the trained model's accuracy and performance. Classification is therefore the task of assigning a class label to items in a data set whose class label is unknown.

ID3 Algorithm

The ID3 algorithm begins with the original set S as the root node. On each iteration, it goes through every unused attribute of the set and calculates the entropy of that attribute. It then chooses the attribute with the smallest entropy value. The set S is then split by the selected attribute to produce subsets of the data. The algorithm continues to recurse on each subset, considering only attributes that have never been selected before.

Recursion on a subset may stop in one of these cases:

- Every element in the subset belongs to the same class (+ or -). The node is then turned into a leaf and labeled with the class of the examples.

- There are no more attributes to select, but the examples still do not belong to the same class. The node is then turned into a leaf and labeled with the most common class of the examples in this subset.

- There are no examples in the subset. This happens when no example in the parent set matches a particular value of the selected attribute, for instance, if there is no example with marks >= 100. A leaf is then created and labeled with the most common class of the examples in the parent set.

The working steps of the ID3 algorithm are as follows:

- Calculate the entropy for every attribute using the data set S.

- Split the set S into subsets using the attribute for which the entropy is minimum.

- Create a decision tree node containing that attribute.

- Recurse on each subset using the remaining attributes.
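The steps above can be sketched in Python as follows. This is a minimal illustration for categorical attributes only; the toy weather-style data, the dictionary-based tree representation, and the function names are assumptions made for the example. Here "entropy of an attribute" is taken to mean the weighted average entropy of the subsets produced by splitting on that attribute.

```python
import math
from collections import Counter

def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    return -sum((n / total) * math.log2(n / total) for n in Counter(labels).values())

def split_entropy(rows, labels, attribute):
    """Weighted average entropy of the subsets produced by splitting on one attribute."""
    subsets = {}
    for row, label in zip(rows, labels):
        subsets.setdefault(row[attribute], []).append(label)
    total = len(labels)
    return sum(len(s) / total * entropy(s) for s in subsets.values())

def id3(rows, labels, attributes):
    # Stop case: every example belongs to the same class, so create a leaf.
    if len(set(labels)) == 1:
        return labels[0]
    # Stop case: no attributes left, so create a leaf with the most common class.
    if not attributes:
        return Counter(labels).most_common(1)[0][0]
    # Pick the attribute whose split has the smallest entropy.
    best = min(attributes, key=lambda a: split_entropy(rows, labels, a))
    remaining = [a for a in attributes if a != best]
    tree = {best: {}}
    # Only values that actually occur are branched on, so empty subsets never arise here.
    for value in {row[best] for row in rows}:
        pairs = [(r, l) for r, l in zip(rows, labels) if r[best] == value]
        tree[best][value] = id3([r for r, _ in pairs], [l for _, l in pairs], remaining)
    return tree

# Hypothetical toy data set, for illustration only.
rows = [
    {"outlook": "sunny", "windy": "no"},
    {"outlook": "sunny", "windy": "yes"},
    {"outlook": "rainy", "windy": "no"},
    {"outlook": "rainy", "windy": "yes"},
]
labels = ["+", "+", "-", "-"]
tree = id3(rows, labels, ["outlook", "windy"])
print(tree)
```

In this toy data, splitting on `outlook` separates the classes perfectly, while splitting on `windy` leaves each subset evenly mixed, so `outlook` is chosen at the root.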

C4.5 Data Mining Algorithms

C4.5 is one of the most important data mining algorithms. It is used to build a decision tree and is an extension of the earlier ID3 algorithm. It improves on ID3 by handling both discrete and continuous attributes as well as missing values. The decision trees generated by C4.5 can be used for classification, which is why C4.5 is often called a statistical classifier.

C4.5 builds decision trees from a set of training data in the same way as the ID3 algorithm. Because it is a supervised learning algorithm, it needs a set of training examples. Each example can be seen as a pair: an input object and the desired output value (class). The algorithm analyzes the training set and builds a classifier that should be able to correctly classify both training and test examples.

A test example is an input object for which the algorithm must predict an output value. Consider the sample training data set S = S1, S2, …, Sn, which is already classified. Each sample Si consists of a feature vector (x1,i, x2,i, …, xn,i), where xj represents the attributes or features of the sample, together with the class in which Si falls.

At every node of the tree, C4.5 selects the attribute of the data that most effectively splits its set of samples into subsets enriched in one class or the other. The splitting criterion is the normalized information gain, which measures the reduction in entropy achieved by the split. The attribute with the highest normalized information gain is selected to make the decision.

The algorithm has a few base cases:

- All of the samples in the list belong to the same class. In this case, C4.5 creates a leaf node for the decision tree saying to choose that class.

- None of the features provides any information gain. In this case, C4.5 creates a decision node higher up the tree using the expected value of the class.

- An instance of a previously unseen class is encountered. Again, C4.5 creates a decision node higher up the tree using the expected value.
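The normalized information gain used as the splitting criterion is commonly called the gain ratio: the information gain of a split divided by the entropy of the split itself. The sketch below shows that calculation for discrete attributes only. The sample data and function names are assumptions for illustration, and handling of continuous attributes and missing values is omitted.

```python
import math
from collections import Counter

def entropy(values):
    """Shannon entropy (in bits) of a list of values."""
    total = len(values)
    return -sum((n / total) * math.log2(n / total) for n in Counter(values).values())

def gain_ratio(attribute_values, labels):
    """Information gain of splitting on an attribute, normalized by the split information."""
    total = len(labels)
    groups = {}
    for value, label in zip(attribute_values, labels):
        groups.setdefault(value, []).append(label)
    # Entropy remaining after the split, weighted by subset size.
    remainder = sum(len(g) / total * entropy(g) for g in groups.values())
    info_gain = entropy(labels) - remainder
    # Split information: entropy of the attribute's own value distribution.
    split_info = entropy(attribute_values)
    return info_gain / split_info if split_info > 0 else 0.0

# Hypothetical samples: one useful attribute and one ID-like attribute.
labels = ["yes", "yes", "no", "no"]
useful = ["a", "a", "b", "b"]
record_id = ["1", "2", "3", "4"]

print(gain_ratio(useful, labels))
print(gain_ratio(record_id, labels))
```

Both attributes separate the classes perfectly, so their plain information gain is the same. The normalization is what lets the criterion prefer `useful` over the ID-like attribute that merely has a distinct value for every sample.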

K Nearest Neighbors Data Mining Algorithms

The nearest neighbor rule identifies the class of an unknown data point on the basis of its nearest neighbor whose class is already known. T. M. Cover and P. E. Hart proposed k nearest neighbor (KNN), in which the nearest neighbors are determined on the basis of the value of k, which indicates how many nearest neighbors are considered when defining the class of a sample data point. Because it uses more than one nearest neighbor to determine the class to which the given data point belongs, it is called KNN. The data samples need to be in memory at run time, so these methods are described as memory-based techniques.

In weighted KNN, the training points are assigned weights according to their distances from the sample data point. Even so, computational complexity and memory requirements remain the main concerns. To overcome the memory limitation, the size of the data set is reduced. For this, repeated patterns, which do not add extra information, are removed from the training data set.

As a further improvement, data points that do not influence the result are also eliminated from the training data set. The NN training data set can be organized using various techniques to improve on the memory limitation of KNN. KNN can be implemented using a ball tree, a k-d tree, or an orthogonal search tree.

Tree-structured training data is divided into nodes, whereas techniques such as NFL and tunable metrics divide the training data set according to planes. Using these techniques, we can increase the speed of the basic KNN algorithm. Consider an object that is sampled with a set of different attributes.

Assuming its group can be determined from its attributes, different algorithms can be used to automate the classification process. In pseudo-code, the k nearest neighbor algorithm can be expressed as:

k ← number of nearest neighbors

for each object X in the test set do

    compute the distance D(X, Y) between X and every object Y in the training set

    neighborhood ← the k neighbors in the training set closest to X

    X.class ← SelectClass(neighborhood)

end for
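The pseudo-code translates fairly directly into Python. The sketch below makes two assumptions that the pseudo-code leaves open: the distance D is taken to be Euclidean distance, and SelectClass is taken to be a simple majority vote. The sample points are made up for illustration.

```python
import math
from collections import Counter

def euclidean(a, b):
    """Euclidean distance between two points of equal dimension (assumed choice of D)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def knn_classify(x, training_set, k, distance=euclidean):
    """Classify x from a training set given as a list of (point, label) pairs."""
    # Compute the distance from x to every training object and keep the k closest.
    neighborhood = sorted(training_set, key=lambda pair: distance(x, pair[0]))[:k]
    # SelectClass: majority vote among the neighbors (assumed choice).
    votes = Counter(label for _, label in neighborhood)
    return votes.most_common(1)[0][0]

# Hypothetical training data: two well-separated groups.
training = [
    ((1.0, 1.0), "A"), ((1.5, 2.0), "A"), ((2.0, 1.0), "A"),
    ((6.0, 6.0), "B"), ((7.0, 7.5), "B"), ((6.5, 8.0), "B"),
]
test_points = [(1.2, 1.4), (6.8, 7.0)]
predictions = [knn_classify(p, training, k=3) for p in test_points]
print(predictions)
```

This version scans the whole training set for every query, which is exactly the cost that the tree-based structures mentioned above are meant to reduce.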

Naïve Bayes Data Mining Algorithms

The Naive Bayes classifier is based on Bayes' theorem. It is particularly useful when the dimensionality of the inputs is high. The Bayesian classifier can calculate the most probable output based on the input. It is also easy to add new raw data at run time and obtain a better probabilistic classifier.

This classifier assumes that the presence of a particular feature of a class is unrelated to the presence of any other feature when the class variable is given. For instance, a fruit might be considered an apple if it is red and round. Even if these features depend on each other or on other features of the class, a naive Bayes classifier considers all of these properties to contribute independently to the probability that the fruit is an apple. The algorithm works as follows.

Bayes' theorem offers a way of calculating the posterior probability, P(c|x), from P(c), P(x), and P(x|c). The naive Bayes classifier assumes that the effect of the value of a predictor (x) on a given class (c) is independent of the values of the other predictors.

- P(c|x) is the posterior probability of the class (target) given the predictor (attribute).

- P(c) is the prior probability of the class.

- P(x|c) is the likelihood, which is the probability of the predictor given the class.

- P(x) is the prior probability of the predictor.

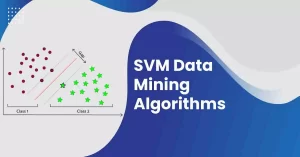
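Bayes' theorem combines these quantities as P(c|x) = P(x|c) · P(c) / P(x). The following minimal sketch applies it to the apple example, estimating the probabilities from simple counts. The fruit data, feature order (color, shape), and function names are assumptions for illustration, and no smoothing is applied, so a feature value never seen with a class gives that class a probability of zero.

```python
from collections import Counter, defaultdict

def train_naive_bayes(rows, labels):
    """Count classes and, per class, how often each feature value occurs."""
    class_counts = Counter(labels)
    # feature_counts[class][feature_index][value] = number of occurrences
    feature_counts = defaultdict(lambda: defaultdict(Counter))
    for row, label in zip(rows, labels):
        for i, value in enumerate(row):
            feature_counts[label][i][value] += 1
    return class_counts, feature_counts

def posterior(row, class_counts, feature_counts):
    """Return P(c|x) for every class c, treating features as independent given the class."""
    total = sum(class_counts.values())
    scores = {}
    for c, n_c in class_counts.items():
        score = n_c / total                                # prior P(c)
        for i, value in enumerate(row):
            score *= feature_counts[c][i][value] / n_c     # likelihood P(x_i | c)
        scores[c] = score
    evidence = sum(scores.values())                        # P(x), summed over classes
    return {c: s / evidence for c, s in scores.items()}

# Hypothetical fruit observations: (color, shape) -> class.
rows = [("red", "round"), ("red", "round"), ("green", "round"),
        ("red", "long"), ("yellow", "long"), ("yellow", "round")]
labels = ["apple", "apple", "apple", "other", "other", "other"]

class_counts, feature_counts = train_naive_bayes(rows, labels)
probs = posterior(("red", "round"), class_counts, feature_counts)
print(probs)
```

For a red, round fruit, the apple score is 1/2 · 2/3 · 3/3 and the other score is 1/2 · 1/3 · 1/3, so after dividing by their sum the posterior probability of apple is 6/7.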

SVM Data Mining Algorithms

SVM has attracted a great deal of attention in recent years and has been applied to many fields. SVMs are used for learning classification, regression, or ranking functions. SVM is based on statistical learning theory and the structural risk minimization principle. Its objective is to determine the location of the decision boundaries, also known as hyperplanes, that produce the optimal separation of classes. Maximizing the margin, that is, creating the largest possible distance between the separating hyperplane and the instances on either side of it, has been shown to reduce an upper bound on the expected generalization error.

SVM is one of the most robust and accurate classification techniques, but it has several problems. It is computationally expensive, because solving quadratic programming problems requires large matrix operations as well as time-consuming numerical computations.

Decomposition methods are one way to make training more tractable. The basic idea of the decomposition method is to split the variables into two parts:

- A set of free variables, called the working set, which can be updated in each iteration.

- A set of fixed variables, which are held fixed during that iteration.

This procedure is repeated until the termination conditions are met.

The SVM was originally developed for binary classification, and it is not simple to extend it to multi-class classification problems. The basic idea for applying SVM to multi-class classification is to decompose the multi-class problem into several two-class problems.
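Two common ways to perform this decomposition are one-vs-rest (each class against all the others) and one-vs-one (each pair of classes). The sketch below only builds the binary sub-problems from a list of class labels. It does not train any SVM, and the label values and function names are assumptions for illustration.

```python
from itertools import combinations

def one_vs_rest(labels):
    """One binary problem per class: that class (+1) against all other classes (-1)."""
    return {c: [1 if y == c else -1 for y in labels] for c in sorted(set(labels))}

def one_vs_one(labels):
    """One binary problem per pair of classes, using only the samples of those two classes.

    Each problem is a list of (sample_index, target), with target +1 for the first class.
    """
    problems = {}
    for a, b in combinations(sorted(set(labels)), 2):
        problems[(a, b)] = [(i, 1 if y == a else -1)
                            for i, y in enumerate(labels) if y in (a, b)]
    return problems

# Hypothetical class labels for five samples.
labels = ["cat", "dog", "bird", "dog", "cat"]
ovr = one_vs_rest(labels)
ovo = one_vs_one(labels)
print(ovr["dog"])
print(ovo[("cat", "dog")])
```

With three classes, one-vs-rest yields three binary problems over all samples, and one-vs-one also yields three, each restricted to the samples of its two classes. A binary SVM would then be trained on each sub-problem.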

J48 Decision Trees Data Mining Algorithms

A decision tree is a predictive machine learning model. It decides the target value of a new sample based on the attribute values of the available data. The internal nodes of a decision tree denote the different attributes, the branches between the nodes show the possible values that these attributes can have in the observed samples, and the terminal nodes show the final value of the dependent variable.

The attribute to be predicted is known as the dependent variable, because its value depends on the values of all the other attributes. The other attributes, which help in predicting the value of the dependent variable, are the independent variables in the data set. The J48 decision tree classifier follows a simple algorithm. To classify a new item, it first needs to create a decision tree based on the attribute values of the available training data.

Whenever it encounters a set of items (a training set), it identifies the attribute that discriminates between the various instances most clearly. The feature that tells us the most about the data instances, so that we can classify them best, is said to have the highest information gain. Now, among the possible values of this feature, if there is any value for which there is no ambiguity, that is, for which all data instances falling within its category have the same value for the target variable, then we terminate that branch and assign to it the target value we have obtained.

For the other cases, we look for another attribute that gives us the highest information gain. We continue in this way until we either get a clear decision about which combination of attributes gives us a particular target value, or we run out of attributes. If we run out of attributes, or if we cannot get an unambiguous result from the available information, we assign the branch the target value that the majority of the items under this branch possess. Now that we have the decision tree, we follow the order of attribute selection as obtained for the tree.

By checking all the respective attributes and their values against those seen in the decision tree model, we can assign or predict the target value of the new instance.

Conclusion to Data Mining Algorithms

These, then, are some of the most widely used data mining algorithms. We hope this article has clarified the foundations of each of them. In it, we covered various data mining algorithms that are popular with professionals around the globe, and you should now have a working understanding of how they differ.

Frequently Asked Questions

1. What are the top 5 Data Mining techniques?

The following data mining techniques cater to different business problems and each offers a distinct insight. Understanding the kind of business problem you are trying to solve will determine which data mining technique will produce the best results.

Below are 5 data mining techniques that will help you achieve optimal results.

- Classification analysis: This analysis is used to retrieve important and relevant information about data and metadata. It is used to classify different data into different classes.

- Association rule learning: This refers to the technique that helps you identify interesting relations (dependency modeling) between different variables in large databases.

- Anomaly or outlier detection: This refers to the observation of data items in a data set that do not match an expected pattern or expected behavior.

- Clustering analysis: A cluster is a collection of data objects; the objects within the same cluster are similar to one another.

- Regression analysis: In statistical terms, regression analysis is the process of identifying and analyzing the relationship among variables.

2. How many algorithms are there in Data Mining?

Along with the 5 data mining techniques that are used most prominently, many others assist in mining data and extracting knowledge. Data mining combines diverse fields such as database systems, artificial intelligence, pattern recognition, statistics, and machine learning. Each of these helps in studying large data sets and carrying out other data analysis activities.

3. What are the six common tasks of Data Mining?

There are a variety of data mining tasks, for example classification, prediction, time-series analysis, association, clustering, and summarization. Each of these tasks is either a predictive data mining task or a descriptive data mining task. A data mining system can carry out one or more of these tasks.

Predictive data mining tasks build a model from the available data set that is helpful in predicting unknown or future values of another data set of interest. A medical practitioner trying to diagnose a disease based on a patient's medical test results can be considered a predictive data mining task.

Descriptive data mining tasks usually find patterns that describe the data and come up with new, significant information from the available data set. A retailer trying to identify products that are purchased together can be considered a descriptive data mining task. Here are six common tasks:

- Classification

- Prediction

- Time-Series Analysis

- Association

- Clustering

- Summarization

4. Which software is used for Data Mining?

Here is the list of top software used for data mining:

- Sisense,

- Sisense for Cloud Data Teams,

- Neural Designer,

- Rapid Insight Veera,

- Alteryx Analytics,

- RapidMiner Studio,

- Dataiku DSS,

- KNIME Analytics Platform,

- SAS Enterprise Miner,

- Oracle Data Mining ODM,

- Altair,

- TIBCO Spotfire,

- AdvancedMiner,

- Microsoft SQL Server Integration Services,

- Analytic Solver,

- PolyAnalyst,

- Viscovery Software Suite,

- Salford Systems SPM,

- HP Vertica Advanced Analytics,

- TIMi Suite,

- Genedata Analyst,

- LIONoso,

- Teradata Warehouse Miner,

- pSeven,

- Civis Platform.

5. What are the prerequisites for Data mining?

Here are the prerequisites for data mining:

Computer Science Skills

- Programming/statistics language

- Big data processing frameworks

- Database knowledge: Relational Databases & Non-Relational Databases

Statistics & Algorithm Skills

- Basic Statistics Knowledge

- Data Structure & Algorithms

- Machine Learning/Deep Learning Algorithm

- Natural Language Processing

Others

- Project Experience

- Communication & Presentation Skills

6. Is Excel a Data Mining Tool?

Many people use Excel to “crunch numbers”, but it can also be used as a database to store and organize information. You can create automated routines (called macros) for repetitive procedures, and you can also interface Excel with other programs to extract information for easier analysis.

So, a data mining operation may involve searching through items kept in Excel such as inventory, prospect lists, payroll figures, data from the accounting system, sales data, and so on. We might be looking for associations between various pieces of information, patterns, and trends.

Perhaps we can better determine who our target customer is and what kinds of things they like to buy, so that we can tailor our marketing accordingly. If we know what our customers' preferred products are, we can arrange to have more of them available and reorder them more quickly, so that we do not run short.

Data mining is commonly used today because companies have become much more customer-focused: if they do not satisfy the customer, the customer has many more options for sourcing things than before.

7. How long would it take to be up and running with Data Mining?

As a rule of thumb, we generally recommend trying to get data for at least three months. Depending on the run time of a single process instance, it may be better to have data for up to a year. For instance, if your process usually takes 5-6 months to complete (think of a public building permit process), a 3-month sample will not give you even one complete process instance.

So it really depends on how long a case in your process usually runs. You want a representative set of cases, and you need to leave some room to catch the typical few long-running instances as well.

If you are still not sure how much data you need to extract, use the following formula based on the expected throughput time of the process:

Timeframe = expected case completion time * 4 * 5

The baseline is the expected completion time for a typical case. The factor of 4 ensures that you have enough data to see 4 cases that were started and completed one after another (of course, there will be others in between). The factor of 5 accounts for the occasional long-running instances (20/80 rule) and makes sure that cases taking up to 5 times longer still fall within the extracted time window.
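As a quick worked example of the formula, here is a small Python helper. The function and parameter names are made up for illustration, and the 2-day completion time is an assumed input.

```python
def extraction_timeframe(expected_completion_time, consecutive_cases=4, long_case_factor=5):
    """Timeframe = expected case completion time * 4 * 5 (same unit as the input)."""
    return expected_completion_time * consecutive_cases * long_case_factor

# A process whose typical case takes about 2 days to complete (assumed value).
days_needed = extraction_timeframe(2)
print(days_needed)  # 2 * 4 * 5 = 40 days of data
```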

8. How are R tools used for Big Data Mining?

Rattle is a GUI tool that uses the R statistical programming language. Rattle exposes the statistical power of R by providing extensive data mining functionality. Rattle has a well-developed and extensive user interface, and it also has a built-in log code tab that generates the equivalent code for any activity taking place in the GUI. The data set produced by Rattle can be viewed as well as edited. Rattle also lets you review the code, use it for many purposes, and extend it without restriction.

9. What kind of data is needed for Data Mining?

In principle, data mining is not specific to one type of data or media. Data mining should be applicable to any kind of information repository. However, approaches and algorithms may differ when applied to different types of data, and the challenges presented by different data types vary significantly.

Data mining is being put into use and studied for many kinds of data stores: relational databases, object-oriented databases, object-relational databases, data warehouses, transactional databases, semi-structured and unstructured repositories such as the World Wide Web, advanced databases such as spatial databases, multimedia databases, textual databases, and time-series databases, and even flat files.

10. What is Cluster in Data Mining?

Clustering is the process of grouping a set of abstract objects into classes of similar objects. A cluster of data objects can be treated as one group. When carrying out cluster analysis, we first partition the data set into groups based on data similarity and then assign labels to the groups. The main advantage of clustering over classification is that it is adaptable to changes and helps single out useful features that distinguish different groups.
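As an illustration of partitioning data by similarity, here is a minimal sketch of K-means, the clustering algorithm mentioned earlier in this article. The sample points, the starting centroids, and the function name are assumptions for the example. Real implementations usually choose the starting centroids more carefully.

```python
def kmeans(points, centroids, iterations=10):
    """Group points around centroids; returns (final centroids, clusters of points)."""
    clusters = []
    for _ in range(iterations):
        # Assignment step: put each point in the cluster of its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(
                range(len(centroids)),
                key=lambda i: sum((a - b) ** 2 for a, b in zip(p, centroids[i])),
            )
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster.
        new_centroids = []
        for cluster, old in zip(clusters, centroids):
            if cluster:
                new_centroids.append(tuple(sum(c) / len(cluster) for c in zip(*cluster)))
            else:
                new_centroids.append(old)  # keep an empty cluster's centroid in place
        if new_centroids == centroids:
            break  # assignments are stable
        centroids = new_centroids
    return centroids, clusters

# Hypothetical 2-D points forming two loose groups.
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 0.0), (8.0, 8.0), (9.0, 9.0), (8.0, 9.5)]
centroids, clusters = kmeans(points, centroids=[(0.0, 0.0), (10.0, 10.0)])
print(clusters)
```

Once the groups are formed, labels can be assigned to them, which is the second half of the process described above.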
